AUGMENTED REALITY SANDBOX

For a fun project to spruce up our office and interact with our local STEAM (Science, Technology, Engineering, Arts and Math) school programs, we decided to build our own Augmented Reality Sandbox. Originally developed by researchers at UC Davis, the open-source AR Sandbox lets people sculpt mountains, canyons and rivers, then fill them with water or even create erupting volcanoes. The UCLA device was built by Glesener and others at the Modeling and Educational Demonstrations Laboratory in the Department of Earth, Planetary, and Space Sciences, using off-the-shelf parts and regular playground sand. After calling our local woodworking friend Kyle Jenkins, though, we decided to give our container a modern look that would match our office.

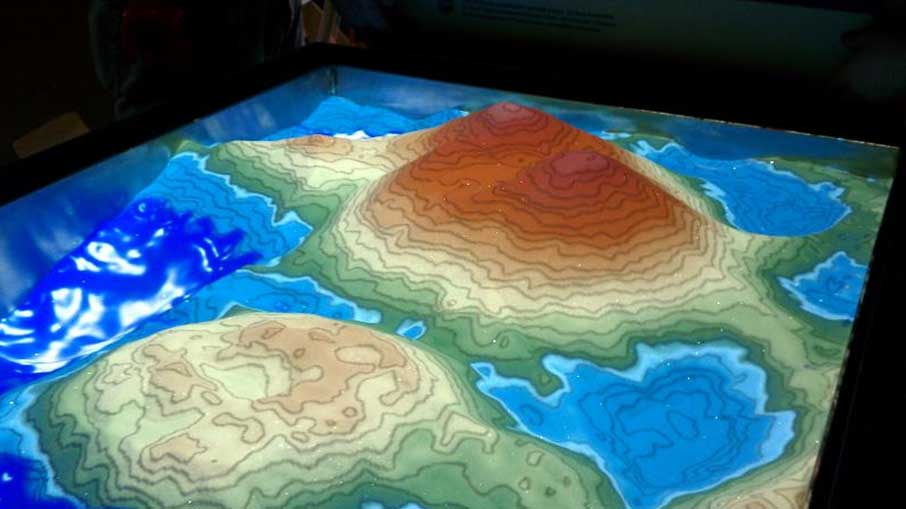

Any shape made in the sandbox is detected by an Xbox Kinect sensor. Raw depth frames arrive from the Kinect camera at 30 frames per second and are fed into a statistical evaluation filter with a configurable per-pixel buffer size (currently defaulting to 30 frames, corresponding to a 1-second delay). The filter serves three purposes: filtering out moving objects such as hands or tools, reducing the noise inherent in the Kinect’s depth data stream, and filling in missing data in the depth stream. The resulting topographic surface is then rendered from the point of view of the data projector suspended above the sandbox, so that the projected topography exactly matches the real sand topography. The software uses a combination of several GLSL shaders to color the surface by elevation using customizable color maps and to add real-time topographic contour lines.
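To make the filtering step a little more concrete, here is a minimal Python sketch of a per-pixel temporal filter. It is not the AR Sandbox code: the class name `PixelFilter`, the `INVALID` marker, and the `min_valid` and `max_variance` thresholds are our own assumptions, chosen only to show how one buffer can reject moving hands, smooth noise, and bridge missing readings.

```python
from collections import deque
from statistics import mean, pvariance

INVALID = 0  # assumed marker for "no depth reading" in this sketch


class PixelFilter:
    """Temporal filter for a single depth pixel (illustrative only)."""

    def __init__(self, buffer_size=30, min_valid=10, max_variance=4.0):
        # Ring buffer holding the most recent raw depth samples.
        self.samples = deque(maxlen=buffer_size)
        self.min_valid = min_valid        # assumed: samples needed to trust the pixel
        self.max_variance = max_variance  # assumed: above this the pixel is "moving"
        self.stable = None                # last accepted elevation, None until one exists

    def update(self, depth):
        """Add one raw sample and return the current stable value."""
        self.samples.append(depth)
        valid = [d for d in self.samples if d != INVALID]
        # Accept a new value only when the pixel has enough readings and they agree.
        if len(valid) >= self.min_valid and pvariance(valid) <= self.max_variance:
            self.stable = mean(valid)  # averaging reduces sensor noise
        # Otherwise keep the previous value: this hides hands and fills gaps.
        return self.stable
```

In this sketch, a hand passing over a pixel makes the variance jump, so the filter keeps reporting the last stable sand height until the buffer settles again. A real implementation would run this for every pixel in parallel rather than recomputing statistics from scratch on each frame.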

At the same time, a water flow simulation runs in the background using another set of GLSL shaders. It is based on the Saint-Venant set of shallow water equations, which are a depth-integrated version of the Navier-Stokes equations governing fluid flow. The simulation is an explicit second-order accurate time evolution of the hyperbolic system of partial differential equations, using the virtual sand surface as boundary conditions. The implementation of this method follows the paper “A Second-Order Well-Balanced Positivity Preserving Central-Upwind Scheme for the Saint-Venant System” by A. Kurganov and G. Petrova, using a simple viscosity term, open boundary conditions at the edges of the grid, and a second-order strong stability-preserving Runge-Kutta temporal integration step. The simulation is run such that the water flows exactly at real speed assuming a 1:100 scale factor, unless turbulence in the flow forces too many integration steps for the driving graphics card to handle.
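For readers curious about the time-stepping, the sketch below shows a second-order strong stability-preserving Runge-Kutta step applied to the shallow water equations. It is heavily simplified and is not the sandbox implementation: it is one-dimensional, uses a flat bed and periodic edges, and swaps in a first-order Rusanov (local Lax-Friedrichs) flux in place of the central-upwind scheme from the paper. All function names and parameter values are our own assumptions.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def flux(h, hu):
    """Physical flux of the 1D shallow water equations for state (h, hu)."""
    u = hu / h
    return (hu, hu * u + 0.5 * G * h * h)


def rusanov(left, right):
    """Rusanov numerical flux across the interface between two cell states."""
    (hl, hul), (hr, hur) = left, right
    fl, fr = flux(hl, hul), flux(hr, hur)
    # Largest wave speed |u| + sqrt(g h) on either side of the interface.
    a = max(abs(hul / hl) + math.sqrt(G * hl), abs(hur / hr) + math.sqrt(G * hr))
    return tuple(0.5 * (fl[k] + fr[k]) - 0.5 * a * (right[k] - left[k]) for k in range(2))


def rhs(cells, dx):
    """Spatial operator L(U) = -(F_right - F_left) / dx on a periodic grid."""
    n = len(cells)
    # faces[i] is the flux through the left face of cell i (cells[-1] wraps around).
    faces = [rusanov(cells[i - 1], cells[i]) for i in range(n)]
    return [tuple(-(faces[(i + 1) % n][k] - faces[i][k]) / dx for k in range(2))
            for i in range(n)]


def euler(cells, dt, dx):
    """One forward Euler stage: U + dt * L(U)."""
    d = rhs(cells, dx)
    return [tuple(c[k] + dt * di[k] for k in range(2)) for c, di in zip(cells, d)]


def ssp_rk2_step(cells, dt, dx):
    """SSP-RK2: U1 = U + dt L(U); U_new = 1/2 U + 1/2 (U1 + dt L(U1))."""
    u1 = euler(cells, dt, dx)
    u2 = euler(u1, dt, dx)
    return [tuple(0.5 * (c[k] + v[k]) for k in range(2)) for c, v in zip(cells, u2)]


# Usage: a hump of water on a flat bed spreads out over 100 steps.
dx, dt = 0.1, 0.005
cells = [(1.5 if 20 <= i < 30 else 1.0, 0.0) for i in range(50)]
volume_before = sum(h for h, _ in cells) * dx
for _ in range(100):
    cells = ssp_rk2_step(cells, dt, dx)
volume_after = sum(h for h, _ in cells) * dx
```

Because each step is a convex combination of forward Euler stages, properties that hold for a single Euler stage under a suitable time step restriction (such as non-negative water depth) carry over to the full step. The time step here was picked by hand to stay well inside that restriction for this particular setup.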

Essentially, this crazy AR sandbox allows people to literally move mountains with their hands. Check it out in the video above.

By using common components and a little ingenuity, we built an incredibly responsive program capable of not only reading changes made in the sand, but also reacting to them in real time. Simply by reshaping the sand, people can create towering mountains and volcanoes, or water-filled valleys and rivers. When a person creates valleys and peaks, the Kinect sensor quickly detects the modifications and the projected topography updates in real time. Moreover, our recent iterations of the software allow people to make rain fall onto the map just by raising their hands over the sandbox. As it falls, the rain erodes some of the landscape and pools in pits and valleys.